GSA SER Link Lists

Understanding GSA SER Link Lists

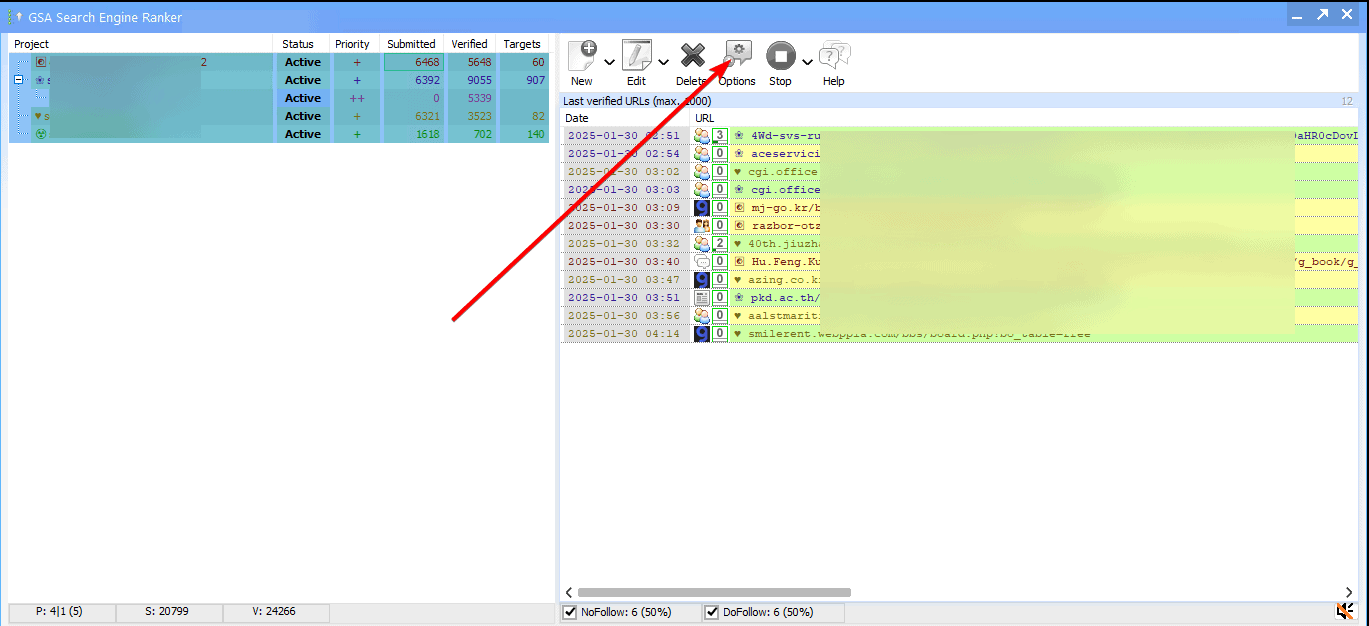

GSA Search Engine Ranker remains one of the most powerful automated link building tools available, but its true potential is unlocked through the use of high-quality GSA SER link lists. A link list is essentially a curated collection of target URLs where the software can attempt to create backlinks. Without a solid list, the application is simply a sophisticated engine with no fuel.

What Makes a Powerful GSA SER Link List

A standard installation of GSA SER includes a few generic built-in lists, but these are typically overused and yield poor results. Premium GSA SER link lists differentiate themselves by focusing on specific platform footprints, high domain authority, and low spam scores. The difference between a verified, niche-relevant list and a random scrape is the difference between a penalty and a rankings boost.

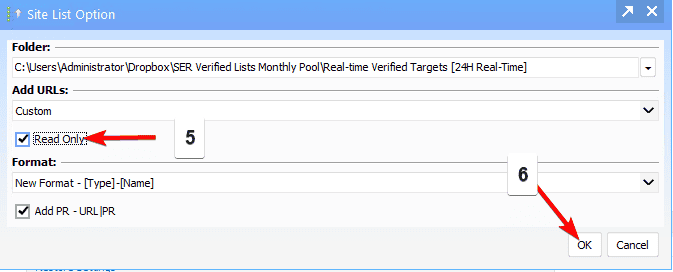

Verified vs. Unverified Targets

When sourcing GSA SER link lists, you’ll encounter two major categories. Verified lists contain URLs that have been pre-checked to ensure the registration and posting forms are still active. Unverified lists are raw scraped URLs that often lead to closed forums, dead blogs, or platforms that have updated their CMS. Using verified lists drastically reduces the "unable to identify engine" errors in your logs and improves the links-per-minute metric.

Contextual and Niche-Relevant Lists

Search engines have evolved to demand topical relevance. Generic GSA SER link lists that target everything from pharmaceutical blogs to gaming sites are no longer effective for serious tiered link building. Modern SEO requires segmented lists: guestbook lists, social bookmark lists, wiki lists, article directory lists, and web 2.0 lists. By isolating these engines and populating them using the platform identifier in GSA SER, you maintain contextual harmony, which is far safer for your money site.

Building Your Own Custom Link Lists

While buying pre-made GSA SER link lists is common, advanced users often build proprietary lists by scraping with tools like ScrapeBox or GScraper. The method relies on advanced search operators and platform footprints (inurl:, "powered by phpBB" "post new topic", etc.) to find fresh targets. Scraping and then filtering by identification rate gives you a set of targets that public lists lack. If your competitors are all using the same commercial GSA SER link lists, your tier 1 properties will leave the same digital trail, reducing the value of the backlink profile.
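As a rough illustration of the scraping step, the sketch below pairs each platform footprint with each niche keyword to produce the query strings you would feed to a scraper. The function name `build_queries` and the sample keywords are assumptions made for this example; they are not part of GSA SER, ScrapeBox, or GScraper.

```python
from itertools import product


def build_queries(footprints, keywords):
    """Combine every footprint with every keyword into one search query string."""
    queries = []
    for footprint, keyword in product(footprints, keywords):
        # Quote the keyword so the search engine treats it as an exact phrase.
        queries.append(f'{footprint} "{keyword}"')
    return queries


# Hypothetical inputs: the footprint comes from the text above,
# the keywords are placeholders for your own niche terms.
footprints = ['"powered by phpBB" "post new topic"']
keywords = ["home brewing", "garden tools"]

for query in build_queries(footprints, keywords):
    print(query)
```

The output is one query per footprint and keyword pair, which can be saved to a text file and imported into whichever scraper you use.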

Filtering Platforms for Success

Not every platform is worth the server resources. A highly optimized list for GSA SER will exclude Chinese, Japanese, and Russian language platforms unless those are your target markets. Furthermore, the list should be filtered against a blacklist database to remove known malware sites or URLs flagged by anti-virus vendors. Effective GSA SER link lists are not just about volume; they are about pure, functional sites that don't trigger security warnings for the person clicking a link.
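A minimal sketch of this kind of filtering might look like the following. It assumes that a country-code TLD is an acceptable rough proxy for site language (it often is not, since many foreign-language sites use .com) and that your blacklist is a plain set of hostnames. The names `filter_targets`, `host_of`, and `EXCLUDED_TLDS` are illustrative only.

```python
from urllib.parse import urlsplit

# Assumption for illustration: country-code TLDs stand in for site language.
EXCLUDED_TLDS = {"cn", "jp", "ru"}


def host_of(url):
    """Return the lowercase hostname of a URL, or "" if none can be parsed."""
    try:
        return (urlsplit(url).hostname or "").lower()
    except ValueError:
        return ""


def filter_targets(urls, blacklisted_hosts, excluded_tlds=EXCLUDED_TLDS):
    """Keep unique URLs whose host is parseable, not blacklisted, and not on an excluded TLD."""
    kept = []
    seen = set()
    for url in urls:
        host = host_of(url)
        if not host or host in blacklisted_hosts:
            continue
        if host.rsplit(".", 1)[-1] in excluded_tlds:
            continue
        if url in seen:
            continue  # drop exact duplicates while preserving order
        seen.add(url)
        kept.append(url)
    return kept
```

For example, `filter_targets(urls, {"bad.example.org"})` would drop every URL hosted on `bad.example.org`, every URL on a `.cn`, `.jp`, or `.ru` domain, and any exact duplicates. A production filter would more likely detect language from page content than from the TLD.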

Integrating Link Lists with Proxies and Captcha Services

The performance of your GSA SER link lists is intrinsically tied to your proxy quality and captcha solving service. Hitting a list of 100,000 targets from a single IP will quickly get that IP blocked or rate-limited. To truly leverage an aggressive GSA SER link list, you need semi-dedicated or dedicated private proxies. Similarly, since many platforms on these lists are protected by complex captchas like reCAPTCHA, pairing your list with a reliable 2Captcha or CapMonster setup ensures that the submitter can actually get through the forms and post verified links to those targets.

The Role of Link Lists in Tiered Architecture

In a classic tiered link building strategy, GSA SER link lists typically power the lower tiers. Tier 1 properties (high-quality Web 2.0s) are often created manually, while Tier 2 and Tier 3 are built with automated lists. The two lower tiers should not share a list, however: using recycled, low-quality GSA SER link lists for Tier 2 can leave a massive footprint and link your Tier 1 properties to a bad neighborhood. Strategic builders curate separate, cleaner lists for Tier 2 that consist mainly of social networks and wikis, reserving the heavy comment spam lists strictly for Tier 3.

Maintaining Freshness and Avoiding "Link Rot"

A major pitfall with static GSA SER link lists is link rot. Forums close, open redirect scripts get patched, and blogging platforms clean up spam. A list that had an 80% identification rate last month might drop to 20% this month. It is critical to consistently re-verify your lists with a URL checker to confirm HTTP status codes. Implementing an automated refresh pipeline where dead URLs are pruned and new scrapes are blended in ensures that your GSA SER link lists remain a productive, lean asset rather than a bloated folder of 404 errors.
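The pruning step of such a pipeline could be sketched as below, using only the Python standard library. This is a simplified illustration, not how GSA SER itself verifies targets: it treats any 2xx or 3xx response to a HEAD request as alive, which says nothing about whether the registration or posting form still works, and some servers reject HEAD requests outright. The function names `fetch_status` and `prune_dead` are hypothetical.

```python
import http.client
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for url, or None if no response was received."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # The server answered, but with an error status such as 404 or 500.
        return error.code
    except (urllib.error.URLError, http.client.HTTPException, OSError, ValueError):
        # DNS failure, timeout, refused connection, or a malformed URL.
        return None


def prune_dead(urls, get_status=fetch_status):
    """Split urls into (alive, dead); alive means a 2xx or 3xx status code."""
    alive, dead = [], []
    for url in urls:
        status = get_status(url)
        if status is not None and 200 <= status < 400:
            alive.append(url)
        else:
            dead.append(url)
    return alive, dead
```

Calling `alive, dead = prune_dead(urls)` on a loaded list returns the two halves, so the live URLs can be written back to the list file and the dead ones logged or discarded. The `get_status` parameter exists so the checker can be swapped for a proxy-aware or cached version without touching the pruning logic.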

Ethical and Practical Considerations

While GSA SER link lists are potent, they exist in a gray area of SEO. Google’s algorithms actively target spam created by automated tools. To mitigate risk, responsible SEOs randomize anchor text profiles, stagger submission velocities, and never point automated lists directly at a primary domain. Instead, these lists should act as a support structure, pumping link juice through buffer sites. Ultimately, the quality of your GSA SER link lists dictates whether your campaign is perceived as a high-value virtual web or a simple spam attack. Smart curation is the only sustainable path forward.